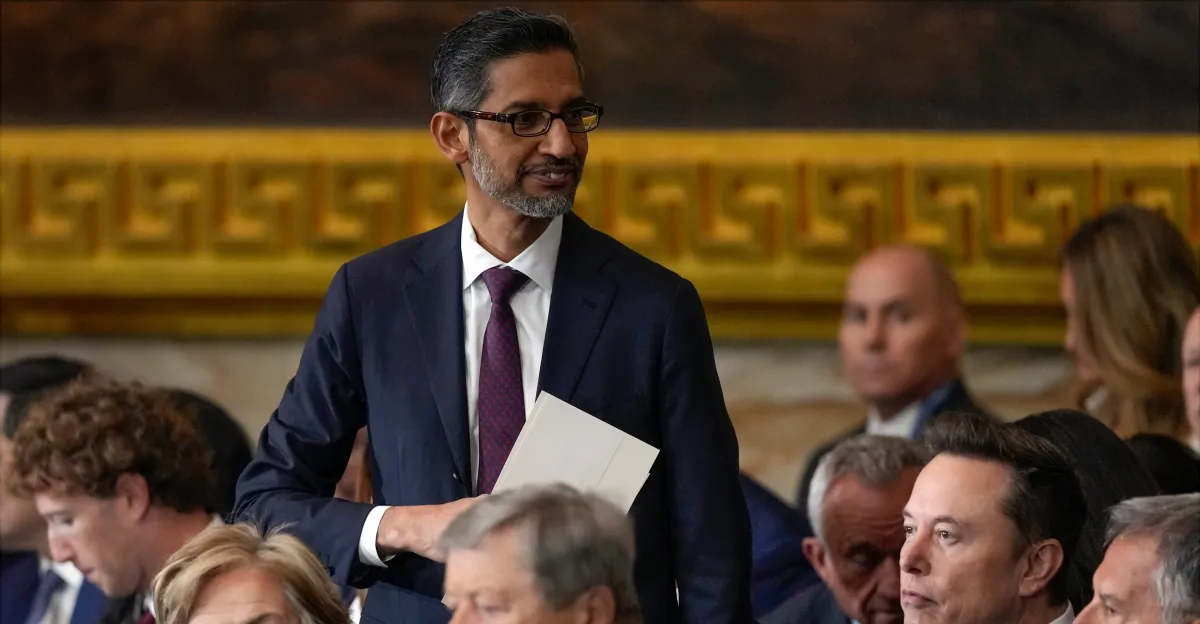

A group of Google employees has formally voiced concern over the company’s reported negotiations with the U.S. Department of Defense (DoD), warning against deploying its flagship Gemini artificial intelligence model in classified military environments. The letter, addressed to CEO Sundar Pichai and signed by staff from divisions including Google DeepMind and Google Cloud, reflects a widening internal debate over how far the company should go in supporting national security initiatives.

While the exact number of signatories remains unclear, the cross-functional nature of the group signals that the issue cuts across both research and commercial arms of the company—an unusual alignment that underscores the seriousness of the concern.

Why Gemini’s Defence Deployment Is Contentious

At the center of the debate is Gemini, Google’s most advanced multimodal AI system, designed to handle complex reasoning tasks across text, images, and code. Its potential deployment in classified settings raises questions about how such systems could be used—ranging from intelligence analysis to operational decision-making.

Employees argue that the lack of public clarity around use cases, safeguards, and oversight mechanisms creates significant ethical ambiguity. In high-stakes environments like defence, even small model errors or biases could have outsized consequences, including flawed intelligence assessments or unintended escalation risks.

A Familiar Flashpoint: Big Tech and Military Contracts

This is not the first time Google has faced internal resistance over defence-related work. In 2018, employee protests over Project Maven, a Pentagon initiative that used AI to analyze drone imagery, led Google to announce that it would not renew the contract.

Since then, however, the geopolitical landscape has shifted. Governments—particularly in the U.S. and its allies—have accelerated efforts to integrate advanced AI into defence infrastructure, citing competition with China and other strategic rivals. For companies like Google, this creates both opportunity and tension: lucrative contracts on one side, and employee and public scrutiny on the other.

Strategic Stakes: AI, Security, and Market Position

From a business perspective, deeper engagement with defence agencies could strengthen Google Cloud’s position in a highly competitive market dominated by AWS and Microsoft Azure, both of which already maintain significant government contracts. AI capabilities like Gemini could become a key differentiator in securing long-term federal deals.

However, such moves also carry reputational risks. Trust—both among users and employees—remains a critical asset for AI companies. Internal dissent, if it grows, could affect talent retention, particularly among researchers who prioritize ethical considerations in AI development.

The Governance Gap: Regulation Still Catching Up

The episode also highlights a broader issue: the regulatory gap surrounding AI use in military contexts. While frameworks for civilian AI governance are gradually emerging in regions like the EU and the U.S., clear global standards for defence applications remain limited. The EU's AI Act, for instance, excludes systems used exclusively for military, defence, or national security purposes from its scope.

Experts argue that without transparent guidelines, decisions about deploying powerful AI systems in sensitive environments are effectively left to corporations and governments, with minimal external oversight. This raises concerns about accountability, especially in classified settings where public scrutiny is inherently restricted.

What Comes Next

Google has not publicly detailed the scope or status of its discussions with the DoD, and it remains unclear whether the internal pushback will influence final decisions. Either way, the letter shows that the tensions first exposed by Project Maven persist, even as the commercial and geopolitical case for defence work has grown stronger.