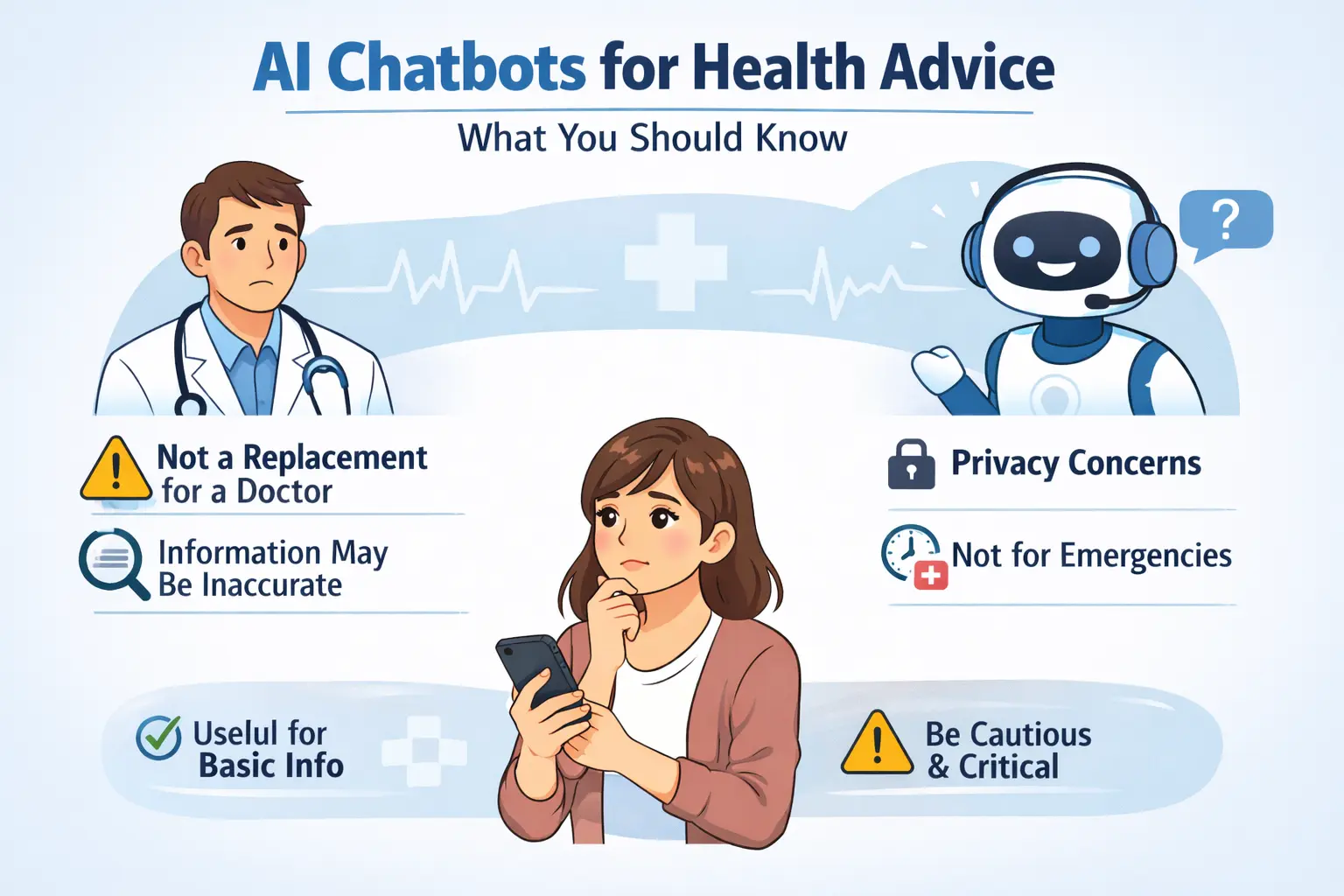

Artificial intelligence chatbots are rapidly becoming a go-to source for people seeking quick answers to medical questions. From understanding symptoms to exploring treatment options, users are increasingly turning to AI-powered tools instead of traditional search engines. The appeal lies in instant responses, conversational explanations, and 24/7 accessibility.

However, health professionals caution that while AI can be helpful for general education, it is not designed to replace licensed medical practitioners.

AI Is Informational — Not Diagnostic

Medical experts emphasize that AI chatbots do not examine patients, run tests, or review complete medical histories. They generate responses based on patterns learned from large datasets, which may include publicly available medical literature and general health information.

Because of this limitation, AI tools may provide broad guidance but cannot deliver personalized diagnoses. Symptoms that appear minor could signal serious conditions, and only a qualified healthcare provider can make that determination.

Risk of Incomplete or Outdated Information

Although AI systems are trained on vast amounts of data, they may not always reflect the most recent medical guidelines or emerging research. Additionally, chatbots can occasionally produce inaccurate or misleading information presented in a confident tone.

Healthcare professionals warn that relying solely on AI advice may lead to delayed treatment, especially in urgent situations such as chest pain, breathing difficulties, or severe allergic reactions.

Privacy Concerns Around Sensitive Health Data

When users share personal health details with chatbots, they may unknowingly disclose sensitive information. Experts recommend reviewing privacy policies before entering identifiable medical data.

While many AI platforms claim to protect user data, policies vary across providers. Patients are encouraged to avoid sharing full names, addresses, or specific medical records unless they fully understand how the information will be stored and used.

When AI Can Be Helpful

Despite concerns, doctors acknowledge that AI chatbots can serve useful purposes. They can:

- Explain complex medical terms in simple language
- Provide general wellness tips
- Offer guidance on preparing questions for a doctor’s visit
- Help users understand medication instructions

Used responsibly, AI tools can empower patients to become more informed participants in their healthcare.

Red Flags: When to Seek Immediate Medical Care

Experts stress that AI should never be the sole source of advice during emergencies. Anyone experiencing severe pain, stroke symptoms, suicidal thoughts, or other urgent conditions, as well as caregivers of an infant with a high fever, should contact emergency services or consult a healthcare professional immediately.

AI chatbots lack the ability to assess physical cues, emotional distress, or subtle warning signs that clinicians are trained to recognize.

A Complement, Not a Replacement

Healthcare leaders increasingly view AI chatbots as supplementary tools rather than substitutes for medical professionals. The safest approach, experts say, is to use AI for educational support while relying on qualified doctors for diagnosis and treatment decisions.

As artificial intelligence becomes further integrated into healthcare systems, regulators and medical institutions are working to establish clearer guidelines to ensure patient safety.