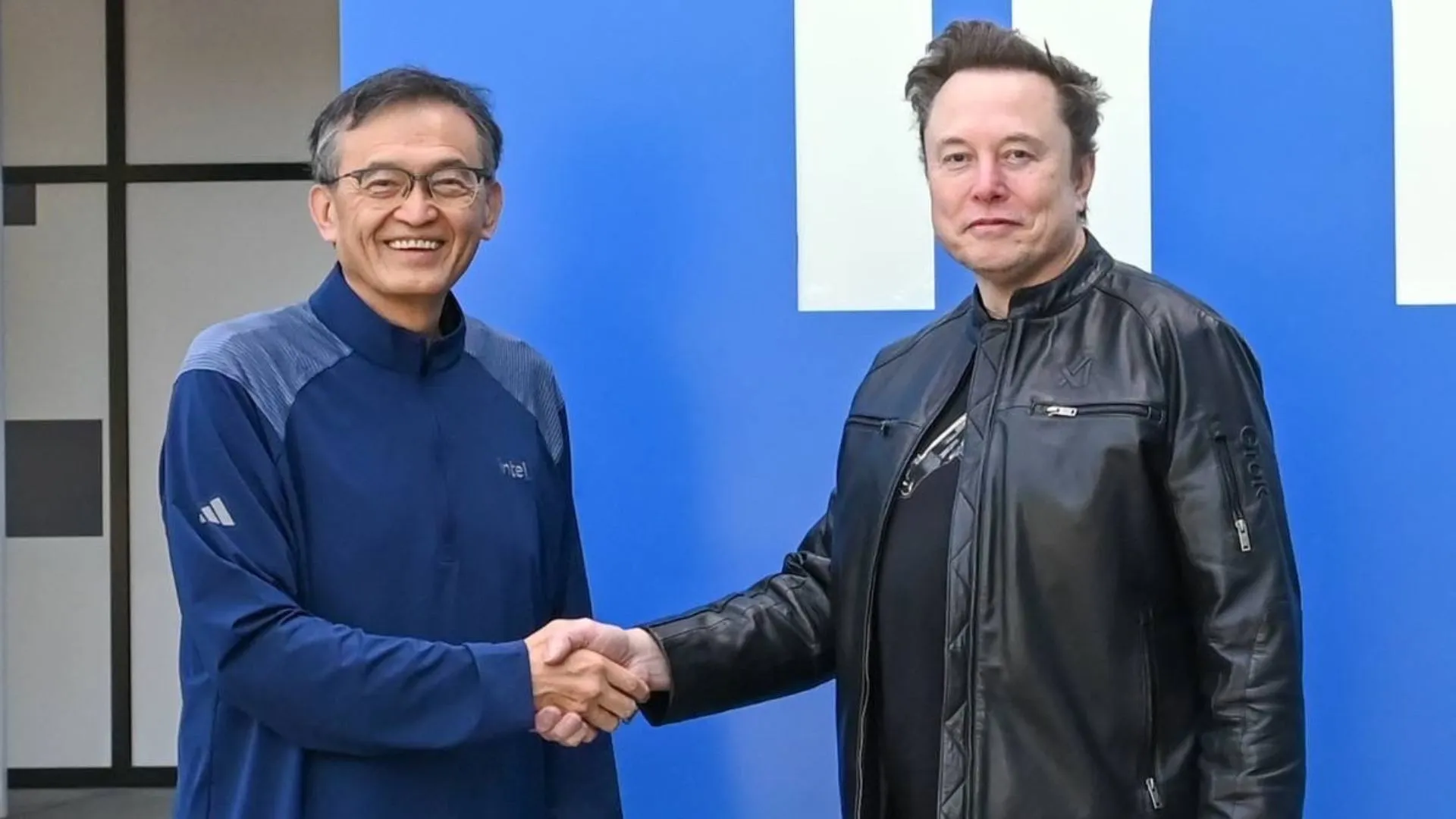

Intel’s reported entry into Elon Musk’s Terafab AI chip project marks a significant development in the rapidly intensifying global race for AI hardware dominance. As demand for specialized chips surges—driven by generative AI, robotics, and large-scale computing—this collaboration signals a shift toward vertically integrated AI ecosystems.

The partnership brings together Intel’s semiconductor manufacturing capabilities and Musk’s expanding AI ambitions, which span autonomous systems, humanoid robotics, and hyperscale infrastructure.

What Is the Terafab Project?

Terafab is understood to be an ambitious initiative focused on designing and producing next-generation AI chips optimized for two key domains:

- Humanoid robots, including systems like Tesla’s Optimus

- AI data centres, which require massive parallel processing power

Unlike general-purpose GPUs, these chips are expected to be highly specialized—tailored for real-time decision-making, energy efficiency, and scalable AI workloads.

This reflects a broader industry shift: moving away from one-size-fits-all processors toward domain-specific architectures.

Intel’s Role: Manufacturing Muscle Meets AI Ambition

Intel’s involvement likely centers on its foundry and advanced packaging services, an area where the company has been aggressively investing to compete with TSMC and Samsung.

Key contributions from Intel could include:

- Advanced node manufacturing (sub-5nm class, if confirmed)

- 3D chip stacking and packaging technologies

- Supply chain resilience for large-scale chip production

For Intel, this is more than just a partnership—it’s an opportunity to reposition itself as a critical enabler in the AI supply chain after years of lagging behind in the GPU-driven AI boom.

Why This Matters for the AI Industry

1. Direct Challenge to Nvidia’s Dominance

Nvidia currently leads the AI chip market, with its GPUs used to train and run most large language models in data centres. A Musk-backed alternative, supported by Intel, could challenge that dominance by offering:

- Customized performance for specific AI workloads

- Potential cost efficiencies at scale

- Greater vertical integration

2. Rise of Custom AI Silicon

Tech giants are increasingly designing their own chips (Google TPU, Amazon Trainium, Apple Silicon). Terafab fits squarely into this trend, emphasizing:

- Control over hardware-software optimization

- Reduced reliance on third-party vendors

- Competitive differentiation in AI capabilities

Humanoid Robots: The Long-Term Play

One of the most intriguing aspects of the Terafab initiative is its focus on humanoid robots. These systems require:

- Low-latency processing for real-world interaction

- Energy-efficient computation for mobility

- On-device AI inference rather than cloud dependency

If successful, Terafab chips could become the backbone of next-generation robotics—enabling machines that operate autonomously in industrial, commercial, and even domestic environments.

Data Centres: Scaling AI Infrastructure

Beyond robotics, the collaboration targets the rapidly expanding AI data centre market. With AI models becoming larger and more compute-intensive, the need for efficient, scalable hardware is critical.

Terafab chips could offer:

- Optimized training and inference performance

- Reduced power consumption per workload

- Better integration with AI software stacks

This is particularly relevant as energy costs and sustainability become major constraints for hyperscale operators.

Risks and Open Questions

Despite its potential, several uncertainties remain:

- Execution risk: Designing competitive AI chips is complex and capital-intensive

- Time-to-market: Nvidia and others are not standing still

- Ecosystem support: Software compatibility and developer adoption will be crucial

Additionally, details about Terafab’s architecture, timelines, and deployment scale are still limited.

Expert Take: A High-Stakes Bet on Vertical AI Integration

From an industry perspective, this move reflects a deeper strategic shift: control over AI is increasingly tied to control over silicon. By aligning with Intel, Musk may be attempting to build an end-to-end AI stack—from chips to applications.

For Intel, the partnership offers a chance to reassert relevance in a market it helped create but partially ceded to competitors.

The Bottom Line

Intel joining the Terafab AI chip project underscores a pivotal moment in the evolution of AI infrastructure. As the lines blur between robotics, data centres, and custom silicon, collaborations like this could redefine how AI systems are built and deployed.

TECH TIMES NEWS
