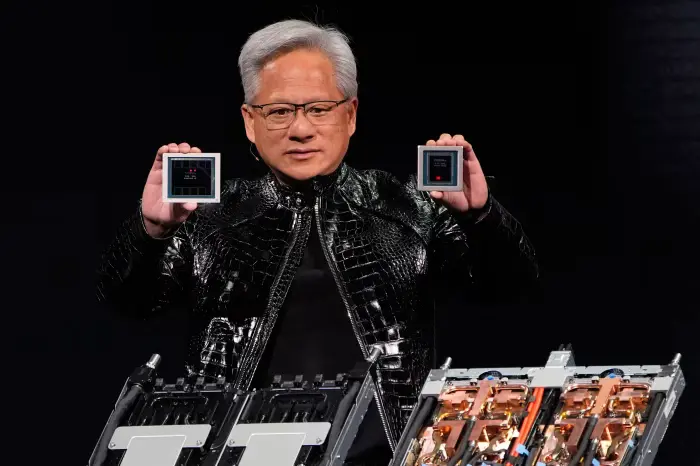

Nvidia has officially unveiled a new artificial intelligence chip platform, signaling its intent to maintain leadership in the rapidly evolving AI hardware space. The announcement comes at a time when competition from both established semiconductor giants and emerging AI-focused startups is intensifying, putting pressure on Nvidia’s long-standing dominance in data center acceleration.

The company positioned the new platform as a foundational step toward supporting future AI workloads, including large language models, generative AI systems, and enterprise-scale inference deployments.

Designed for the Next Wave of AI Workloads

According to Nvidia, the newly introduced platform has been engineered to address the growing computational demands of modern AI models. These workloads increasingly require massively parallel processing, faster interconnects, and higher memory bandwidth to handle training and inference efficiently.

The platform reportedly integrates an upgraded GPU architecture, advanced networking technologies, and optimized software support, aiming to deliver higher performance per watt while reducing operational complexity for data centers.

Rising Competition in the AI Chip Market

Nvidia’s announcement arrives amid growing competition from rivals such as AMD, Intel, and several cloud providers developing in-house AI accelerators. Companies like Google, Amazon, and Microsoft are increasingly investing in custom silicon to reduce reliance on third-party chips and control costs at scale.

At the same time, AI-focused startups are entering the market with specialized chips optimized for specific workloads, such as inference or edge AI, challenging Nvidia on both price and efficiency.

Focus on Software and Ecosystem Strength

Beyond raw hardware performance, Nvidia continues to emphasize its broader software ecosystem as a key differentiator. The new AI chip platform is expected to work closely with Nvidia’s CUDA platform, AI libraries, and enterprise tools, enabling developers to move existing workloads over with minimal friction.

Industry analysts note that Nvidia’s tight integration of hardware and software remains one of its strongest competitive advantages, particularly for enterprises that prioritize stability and long-term support.

Strategic Importance for Data Centers and Enterprises

The new platform is aimed primarily at hyperscale data centers, research institutions, and large enterprises deploying AI at scale. With AI adoption accelerating across industries such as healthcare, finance, automotive, and manufacturing, demand for powerful and efficient AI chips continues to rise.

Nvidia’s latest move suggests the company is preparing for sustained demand while anticipating shifts in how AI infrastructure is built and deployed over the coming years.

What This Means for the AI Industry

Nvidia’s unveiling highlights how critical AI chips have become in shaping the future of computing. As competition grows fiercer, innovation cycles are shortening, and customers are gaining more options than ever before.

TECH TIMES NEWS
