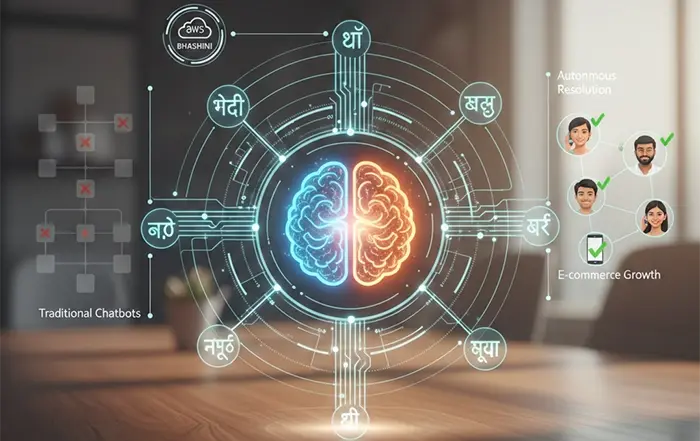

Amazon Web Services (AWS) has entered into a collaboration with SHI India, a leading IT solutions and services provider, to accelerate the development of indigenous artificial intelligence (AI) models. The partnership is designed to address India-specific challenges by enabling organizations to build, train, and deploy AI systems that are more aligned with local languages, datasets, and regulatory requirements.

At its core, the initiative reflects a broader shift toward AI localization, where global cloud providers are increasingly working with regional players to create solutions tailored for domestic markets rather than relying solely on generalized global models.

Why Indigenous AI Models Matter Now

India’s AI adoption is growing rapidly across sectors such as finance, healthcare, agriculture, and governance. However, most large-scale AI models today are trained on datasets that are heavily skewed toward Western contexts. This creates gaps in accuracy, cultural relevance, and language understanding when deployed in India.

By focusing on indigenous model development, AWS and SHI India aim to:

- Improve performance for Indian languages and dialects

- Ensure compliance with emerging data governance norms

- Reduce reliance on external AI ecosystems

- Enable sector-specific customization for enterprises

This approach aligns closely with India’s ongoing push for digital sovereignty and self-reliance in critical technologies.

What AWS Brings to the Table

AWS contributes its cloud infrastructure, including AI and machine learning services, high-performance computing capabilities, and scalable data processing tools. Platforms such as Amazon SageMaker and Amazon Bedrock (for generative AI) are expected to play a key role in shortening model development cycles.

The advantage here is not just compute power. It is the ability to scale AI workloads efficiently while maintaining security and compliance, which is critical for enterprise and government use cases.

SHI India’s Role: Local Expertise and Enterprise Reach

SHI India complements AWS with its strong on-ground presence, enterprise relationships, and understanding of regional market dynamics. The company is expected to help organizations:

- Identify practical AI use cases

- Integrate AI into existing IT systems

- Navigate deployment challenges

- Optimize cost and performance

This combination of global infrastructure and local execution capability is often what determines whether an AI initiative succeeds or fails.

Focus Areas: From Startups to Public Sector

The collaboration is expected to support a wide range of stakeholders:

- Startups: Faster access to AI infrastructure and tools without heavy upfront investment

- Enterprises: Custom AI models for automation, analytics, and customer experience

- Public sector: AI applications in governance, smart cities, and citizen services

In India in particular, where multilingual support and large-scale population data are critical, localized AI models could unlock entirely new categories of applications.

Industry Context: A Growing Race for AI Localization

This move comes at a time when global tech companies are increasingly investing in region-specific AI ecosystems. Governments worldwide are also emphasizing local data processing and AI independence.

For AWS, this collaboration strengthens its positioning in one of the fastest-growing cloud markets globally. For India, it represents another step toward building a self-sustaining AI innovation pipeline.

Expert Insight: Opportunity and Challenges Ahead

While the collaboration has clear strategic value, execution will determine its long-term impact. Building high-quality indigenous AI models requires:

- Access to diverse and clean datasets

- Strong governance frameworks

- Skilled AI talent

- Continuous model evaluation and updates

If these elements are addressed effectively, the partnership could set a benchmark for how global-local collaborations can drive meaningful AI innovation.

The Takeaway

The AWS–SHI India collaboration is more than a business partnership. It reflects a structural shift in how AI is developed and deployed: instead of one-size-fits-all models, the industry is moving toward context-aware, locally optimized AI systems.

TECH TIMES NEWS